
What is continuous improvement?

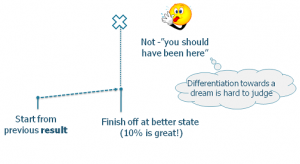

Continuous improvement always starts by observing previous results. They are our baseline for further improvement. We strive to improve steadily, a little at a time; 10% is great! But the first step is always to accept the facts, even if we would have liked them to be better. It is far too easy to sweep failed projects under the carpet rather than use them as a baseline for further improvement. A common mistake is to base improvements on dream targets rather than previous results; it is hard to learn anything from failing to meet such targets.

The cornerstone of continuous improvement – standard work

The core component in Toyota’s improvement process is standard work – “Without standards, there can be no improvement”. The idea of standard work is to provide a steady base from which to take the next step. Without a base, improvement efforts are likely to be wasted since we’ll be rolling backwards as much as moving forward.

Standard work provides the baseline for our improvements

So, what is standard work?

Let’s look at an example.

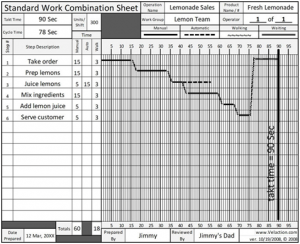

Standard work for making lemon juice

Standard work has three components:

- Takt time (the time interval the factory has to deliver an item in order to match customer demand)

- WIP (work in progress: the number of items worked on concurrently)

- The sequence of operations.
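Takt time is plain arithmetic: available working time divided by the number of units the customer demands. The sketch below computes it and checks whether a sequence of operations keeps pace. The operation names, durations, shift length and demand are all hypothetical (they are not the data behind the lemon juice figure), and comparing the summed cycle time directly to takt assumes one operator working with a WIP of one.

```python
# Hypothetical standard work for one glass of lemon juice; the operation
# names and durations are illustrative, not taken from the figure above.
OPERATIONS = [("cut lemons", 15.0), ("squeeze", 30.0), ("pour and serve", 10.0)]


def takt_time(available_seconds: float, customer_demand: int) -> float:
    """Takt time = available working time / number of units demanded."""
    if customer_demand <= 0:
        raise ValueError("customer demand must be positive")
    return available_seconds / customer_demand


def cycle_time(operations: list[tuple[str, float]]) -> float:
    """Time to take one item through the whole sequence of operations."""
    return sum(seconds for _, seconds in operations)


# Hypothetical shift: 8 hours minus 30 minutes of breaks, 450 glasses demanded.
available = (8 * 60 - 30) * 60     # 27,000 seconds
takt = takt_time(available, 450)   # 60.0 seconds per glass
ct = cycle_time(OPERATIONS)        # 55.0 seconds per glass

# Assumption: one operator, WIP of one, so cycle time must not exceed takt.
print(f"takt {takt:.1f}s, cycle {ct:.1f}s, keeps pace: {ct <= takt}")
```

With these made-up numbers the sequence finishes a glass in 55 seconds against a takt of 60, leaving a small buffer.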

Using standard work makes it easy to verify an improvement idea. If the improvement produces a better result, for example a shorter timeline, it is by definition better and is accepted as the new standard/baseline. Improvements can of course be made for other reasons, such as reducing raw material cost or improving safety. If the improvement can be shown to move a key indicator (time, quality, cost), it will be accepted. In manufacturing, standard work helps ensure we continuously deliver a quality product, on time.
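The acceptance rule above can be sketched as a comparison of key indicators between the current standard and a candidate. This is a minimal sketch, not a prescribed method: it assumes lower is better for every indicator and adds one rule the text does not state explicitly, namely that a candidate must not make any indicator worse. The class, function and all numbers are hypothetical.

```python
from dataclasses import astuple, dataclass


@dataclass(frozen=True)
class Indicators:
    """Key indicators for one process version; lower is better for each (assumption)."""
    lead_time_s: float
    defect_rate: float
    cost_per_unit: float


def accept_as_new_standard(baseline: Indicators, candidate: Indicators) -> bool:
    """Accept the candidate if it improves at least one indicator and worsens none."""
    pairs = list(zip(astuple(candidate), astuple(baseline)))
    improves_something = any(c < b for c, b in pairs)
    worsens_nothing = all(c <= b for c, b in pairs)
    return improves_something and worsens_nothing


# Hypothetical numbers for the current standard and two improvement ideas.
baseline = Indicators(lead_time_s=55.0, defect_rate=0.02, cost_per_unit=0.40)
faster = Indicators(lead_time_s=50.0, defect_rate=0.02, cost_per_unit=0.40)
sloppier = Indicators(lead_time_s=45.0, defect_rate=0.05, cost_per_unit=0.40)

print(accept_as_new_standard(baseline, faster))    # True
print(accept_as_new_standard(baseline, sloppier))  # False: quality got worse
```

The "worsens nothing" rule keeps a faster but sloppier process from becoming the new baseline; a team could reasonably choose a different trade-off.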

Seek perfection

Continuous improvement has no end destination. It is a direction, not a place. A mistake easily made is thinking we’ve reached a good level. That is not the point! The point is to seek perfection.

Roughly, continuous improvement follows these steps:

4. Challenge – Seek perfection

3. Maintain – Learn to keep it

2. Achieve – Reach the level

1. Define – Set current standard

By far the hardest part in product development is "Maintain". It is easy to abandon improvements out of a lack of patience, or the endurance needed to wait for the effects. For example, changing the way we do testing might need a full release cycle before we can see the effects. If you keep adding new changes before consolidating the learning from the last one, you'll be spinning around like a guinea pig in a constant try/fail loop.

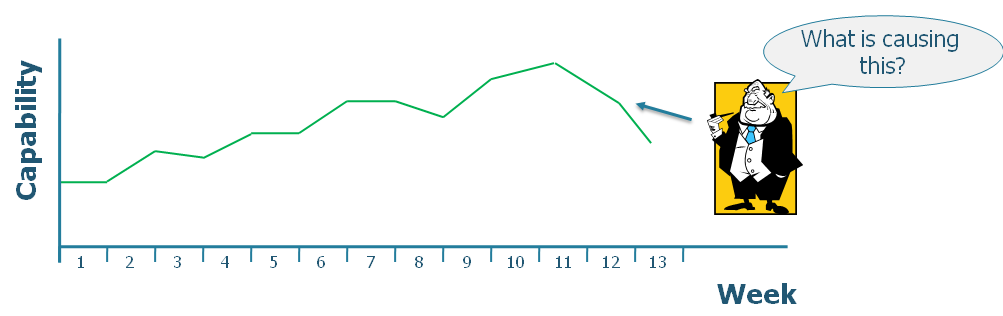

Maintaining requires questioning and acting when performance degrades. This is the natural role for management. If management doesn't do this, what are they managing? It is tempting in the Agile world to hand off all of this responsibility to the teams and step too far away. But until the teams are capable of self-adjusting, we want to step in and ask questions when performance degrades. This is also a natural feedback loop to managers, who actually control many of the conditions in which the team operates. For example, if team members are switched around too frequently, we will surely see the consequences.

What about continuous improvement in the product development world?

In product development, our world is far from static. We deal with unexpected obstacles and fleeting opportunities. We solve multivariable problems in uncharted terrain. So we must ask: what makes a good baseline?

Choosing standard work as baseline?

Using standard work in product development is hard while you are still faced with the design challenge of figuring out which problems need solving. For example, when the world's fastest airplane, the SR-71 Blackbird, was created, engineers were faced with designing a titanium hull. To build it, new tools and machinery had to be created to deal with the extraordinary durability of titanium. During rapid discovery the game changes so quickly that standards are useless. More important factors at this stage are innovation, experimentation and flexibility.

Of course, not all work in product development is rapid discovery, and for repetitive tasks standard work makes great sense. It is just that in software, our approach to repetitive work is pragmatic – solve it using automation :). Continuous integration is a great example.

There is definitely more to discover about the role standard work can play in product development. For example, not all parts of a product change constantly. We standardize core components for reuse. This helps us shift our focus away from parts where differentiation makes no difference to us, toward parts where we choose to gain from differentiation. I'll leave further exploration to the reader! 🙂

In product development, we have a replacement for standards – feedback loops.

Choosing the market impact as a baseline

What if we choose market feedback as a baseline for improvements? The information value would certainly be high. The challenge would be how, and what, to learn from it. Many factors influence the results, which means the signal-to-noise ratio will be low, making it hard to link actions to outcomes. For example: "Is the current user trend a result of the improvement we made, the marketing campaign launched last month, or simply a coincidence?" It's hard to know. Market feedback is also generally slow, too slow to provide feedback at the rate we change and improve.

Choosing system output as the baseline

System output is the middle ground, and it is within our scope of control. Examples of system output are lead time, quality defects per release, time between defects and similar measures. Let's look at the upside of choosing system output as a baseline:

- It allows flexibility in the choice of solutions

- It contains learning not only about the outcome, but also about the interaction of parts. For example, we might learn that refactoring our code base didn't help because we still failed to configure the application correctly.

- It has the potential to provide feedback at an acceptable rate.

The downside is that it will contain noise (the price of dealing with interdependent parts). Thus, we need to apply some thought and analysis to understand when to act and when not to.
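To make "system output" concrete, here is a minimal sketch that derives two of the measures mentioned above, lead time per work item and defects per release, from raw records. The record layout, item IDs, dates and defect counts are hypothetical; in practice they would come from whatever tracker a team already uses.

```python
from datetime import date
from statistics import mean

# Hypothetical work items: (id, started, finished). Not real data.
ITEMS = [
    ("A-1", date(2024, 3, 1), date(2024, 3, 6)),
    ("A-2", date(2024, 3, 4), date(2024, 3, 12)),
    ("A-3", date(2024, 3, 5), date(2024, 3, 8)),
]

# Hypothetical defect counts reported after each release.
DEFECTS_PER_RELEASE = {"1.0": 4, "1.1": 2, "1.2": 3}


def lead_times_days(items):
    """Calendar days from start to finish for each work item."""
    return [(finished - started).days for _, started, finished in items]


lead_times = lead_times_days(ITEMS)
print(lead_times)                                    # [5, 8, 3]
print(round(mean(lead_times), 2))                    # 5.33
print(mean(list(DEFECTS_PER_RELEASE.values())))      # 3
```

Averages like these are only a starting point; the noise mentioned above is why the spread of the values matters as much as their mean.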

Knowing when to act

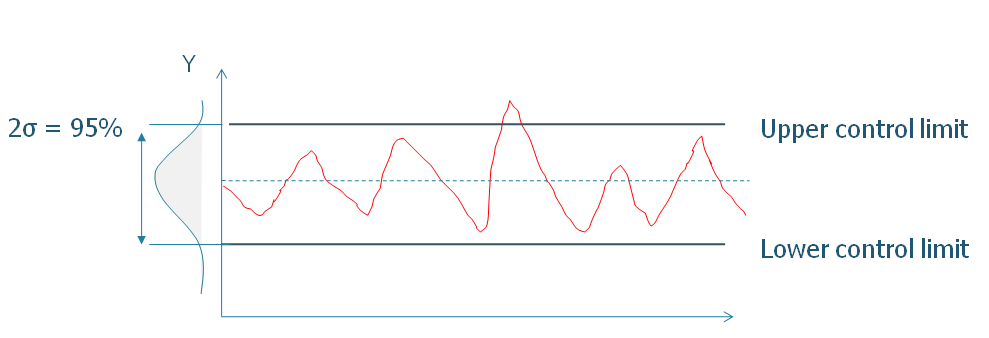

Since our data will contain noise (unknown dependencies, unknown territory, etc.), we need to figure out when to act. One way of doing this is to separate normal events from "unexpected" ones. By drawing control lines a couple of standard deviations from the mean, we can identify special events, since points beyond those lines are unlikely to be random.

By placing the control limits two standard deviations on either side of the mean, we expect about 95% of events to fall within them (assuming a normal distribution). Is it fair to assume a normal distribution? That can be debated, of course. But if we are analyzing lead time, or any variable that can be called "the sum of things", a normal distribution is a good assumption. It's simple, it's natural (central limit theorem), and it treats large deviations as unlikely. In brief, it allows us to reality-check our outcome.
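The control limits described above can be computed with Python's standard `statistics` module. This is a minimal sketch, assuming roughly normal data and limits of mean ± 2 sample standard deviations taken from a stable baseline period; the lead-time values are hypothetical, and the function names are illustrative.

```python
from statistics import mean, stdev


def control_limits(baseline: list[float], k: float = 2.0) -> tuple[float, float]:
    """Mean +/- k sample standard deviations of the baseline period.

    With k = 2 and normally distributed data, about 95% of points fall inside.
    """
    m = mean(baseline)
    s = stdev(baseline)
    return m - k * s, m + k * s


def special_events(values: list[float], lower: float, upper: float) -> list[tuple[int, float]]:
    """Index and value of every point outside the control limits."""
    return [(i, v) for i, v in enumerate(values) if v < lower or v > upper]


# Hypothetical lead times (days) from a stable baseline period.
baseline = [10, 12, 11, 9, 13, 10, 12, 11]
lower, upper = control_limits(baseline)
print(f"limits: {lower:.2f} .. {upper:.2f}")   # limits: 8.38 .. 13.62

# New observations are judged against the baseline's limits.
recent = [11, 14, 9, 20]
print(special_events(recent, lower, upper))     # [(1, 14), (3, 20)]
```

The limits are computed from the baseline only, so a run of unusual new points cannot widen the limits and hide itself. Points flagged this way tell you where to look, not what caused the change.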

If you're not a great fan of statistics, there's still good news. For quick calls, it's often good enough just to eyeball the chart and ask yourself questions like "Where is this trending?", "What's happening to the variation?" and "Which events seem normal?"

Regardless of which charting technique is used, finding the real culprit behind a change in the chart will be tricky. A chart holds limited knowledge. It can tell you that something should be done, but unfortunately not what. 🙂 To get that understanding, it's vital to get out of the meeting room and talk with the people closest to the problem. That's where you'll find the most valuable information.

So why not just do trial and error, that is, skip the "go to the problem"/learning part and simply give things a try? Trial and error is fun (I know…), but it's also uncontrolled. The risk is that we introduce so much uncertainty and ambiguity that learning from it becomes hard. Also, even if there were something to learn, we wouldn't know what to look for, since we had no idea what outcome to expect before we ran the experiment.

Learning to do controlled experiments

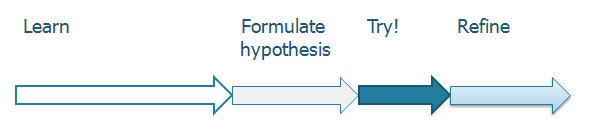

The steps in a controlled experiment

- Learn – Go to the problem. Understand it well enough to take the next step: formulating a hypothesis.

- Formulate hypothesis – You understand the interactions well enough to estimate the effect of your actions on the outcome.

- Try – Give it a shot!

- Refine – Few solutions are right on the first try. Refine it based on what you learned from the first attempt.

There is a big difference between simple "trial and error" and controlled experiments. In a controlled experiment, we always start by studying the domain until we have learned enough to formulate a hypothesis.

A simple validity check for a hypothesis: before we run the experiment, we can state its expected impact on one or more system outputs.
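One way to enforce that check is to write the hypothesis down as data before the experiment starts, including the predicted level of a system output, and compare against it afterwards. The sketch below is a hypothetical illustration: the `Hypothesis` fields, the `evaluate` function and the example numbers are assumptions, not part of any established tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    """A hypothesis stated before the experiment (field names are illustrative)."""
    action: str        # what we will change
    output: str        # which system output we expect to move
    baseline: float    # current level of that output
    predicted: float   # level we expect after the change


def evaluate(h: Hypothesis, observed: float) -> tuple[str, float]:
    """Compare the observed output with the prediction.

    Returns a verdict and the share of the predicted change that was realized.
    """
    predicted_change = h.predicted - h.baseline
    if predicted_change == 0:
        raise ValueError("a hypothesis must predict some change in the output")
    observed_change = observed - h.baseline
    share = observed_change / predicted_change
    # Positive share: the output moved in the predicted direction.
    verdict = "supported" if share > 0 else "not supported"
    return verdict, share


h = Hypothesis(
    action="automated test cases for every story",
    output="testing days per sprint",
    baseline=5.0,
    predicted=2.0,
)
print(evaluate(h, observed=3.5))  # ('supported', 0.5)
print(evaluate(h, observed=5.5))  # verdict: 'not supported'
```

A fuller check would also ask whether the observed change exceeds the output's normal variation; otherwise a small "supported" result may just be noise.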

How is this different from PDCA?

The Plan-Do-Check-Act cycle contains four steps:

- Plan – Identify and analyze the problem, suggest solution

- Do – Implement the solution

- Check – Gather data

- Act – Implement and standardize the solution

What PDCA does well is point out the need to approach the problem scientifically. But PDCA does not emphasize the importance of experimentation and innovation, two vital components in a dynamic and uncertain environment. In product development, we get greater value out of experimentation rather than planning, and innovation rather than standardization.

Thus: we should not spend more time analyzing (Plan) than we need to formulate a hypothesis. At that point, experiment! Then favor innovation (trying something different) over standardization ("Act").

In software, we have much to learn about using controlled experiments rather than getting bogged down in tool trench wars (pick your favorite: Java vs. C#, Git vs. Subversion, etc.).

Challenge!

A couple of years ago, I met a tester working in a development team. Testing the team's work typically took five days of each sprint, and had done so for quite some time. While talking with her, I suddenly asked:

“Why can’t you complete testing in half a day?”

She looked at me, puzzled. I continued:

"Well, what if you wanted testing to take half a day? What would it take to make that happen?"

She replied:

"Yes… that would mean that our developers would need automated test cases for every story. We would also need a stable test environment, and we'd have to get rid of the two days it takes to load production data into the test environment every sprint."

I replied: “So why don’t we do that?”

Challenge is one of the most underused levers in product development. We tend to avoid challenge out of fear: fear of failure, fear of changing roles, fear of letting go of existing solutions. But the horrible truth is that we are doing ourselves a great disservice. Paraphrasing Steve Jobs:

“If we don’t cannibalize our own business, someone else will do it for us”

The countermeasure is to encourage risk-taking: ask for and expect failure. Expect learning to happen throughout your career. Expect roles to come and go. Take the long view: expect your staff to continuously learn new things, and give them the opportunity to do so.

To abandon old constraints, we need to imagine the impossible. This is where challenge comes in. Challenge is not working massive amounts of overtime; it's jiggling our minds, asking us to think as if we were starting from a blank sheet of paper. It's always easier to accept constraints than to challenge them. That's normal, because that is how our organizations train us.

To challenge is the highest form of respect.

The importance of knowledge sharing

New insights are likely to emerge outside your domain (there are 8 billion people outside your company, right?). So staying close to communities is a good idea. It helps you pick up new ideas and decreases the risk of dropping ideas "because they don't fit your company/context/situation". Keeping close ties to communities means getting a second chance to pick up ideas from companies that aren't necessarily playing by your rules. Remember, improvement happens at the rate at which ideas move.

Tying it together

With these ingredients at hand, we can formulate a hypothesis for continuous improvement:

The Baseline – Challenge – Experiment loop contains both new and validated learning

Baseline – choose a system output and learn to maintain its performance.

Challenge – Challenge existing solutions and constraints. Play with impossible scenarios and see where they take you. Then quickly move on to:

Run a controlled experiment – Don’t get bogged down in lengthy discussions. Move forward! Do controlled experiments to produce validated learning.

"Experiments beat facts, facts beat emotion"

Happy improving!

– Mattias Skarin