Agile development with short release cycles has been around for a while now. Most of us want fast feedback loops, and many even want Continuous Delivery, with changes to production software every day. However, most of us also want secure software, and the question is: can security engineering keep up the pace? Fast feedback that your production website has been hacked is not so nice.

Security is a quality attribute of your software, just like performance. If you don’t want to be surprised by bad performance in production, what do you do? You test and design for it, of course, and preferably you do so continuously from the start.

In my experience, however, the same cannot be said of security. It is very often relegated to a once-a-year penetration test. Not really an agile way of working, is it? Not a secure one either, since untested software is released as often as every day. There must be a better way of working, one that allows us both to work in an agile way and to verify security along the way.

In the security field, people like Gary McGraw have long been advocating ways of “Building Security In”. The Microsoft MVP Troy Hunt also proposes that you should “Hack yourself first” instead of just waiting for the pentesters. Shouldn’t it be possible to weave these security activities into the process, the same way normal testing activities are woven in using TDD? Indeed, I, as well as others, believe it is. Let’s look at how small extensions to an agile process can work in this direction.

Extending Sprint planning to deal with security

To start off you must first know what the requirements are. In a normal agile project this is done by eliciting User Stories from the customer or the Product Owner.

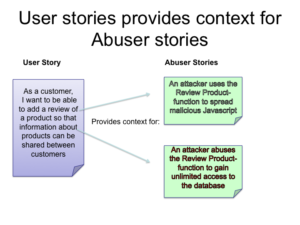

Let’s take an example of an online e-Commerce site. A User Story might be “As a customer I want to be able to add a review of a product so that information about products can be shared between customers”.

This works very well for traditional functional requirements, but non-functional requirements need a little extra thought. In the case of security requirements, it is often useful to state a requirement as a scenario that should NOT happen. We shall call these scenarios “Abuser Stories”. These stories are non-technical descriptions of bad things you want to make sure you avoid. An Abuser Story for this site might be:

“An attacker uses the Review Product-function to spread malicious Javascript”. Another might be: “An attacker abuses the Review Product-function to gain unlimited access to the database”.

A Product Owner might not be able to come up with these stories alone and may need the help of a security engineer to find these threat scenarios.

These Abuser Stories and User Stories then go into the Sprint planning. Just like User Stories, not all Abuser Stories can fit in the Sprint. For Abuser Stories, however, the decision on whether they go into the Sprint should depend on a risk analysis. A story that is both likely to be exploited and severe in its consequences gets priority. This prioritisation should preferably also be done with the help of a security engineer.
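As a rough sketch of such a risk analysis, assume each Abuser Story is rated on two hypothetical 1 to 5 scales, likelihood and consequence, and that the product of the two is used as the risk score. The class name, the scales and the ratings below are illustrative assumptions, not part of any standard method:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AbuserStory:
    description: str
    likelihood: int   # hypothetical scale: 1 (unlikely) to 5 (almost certain)
    consequence: int  # hypothetical scale: 1 (minor) to 5 (severe)

    @property
    def risk(self) -> int:
        # Illustrative score: likelihood multiplied by consequence.
        return self.likelihood * self.consequence


def prioritise(stories):
    """Return the stories ordered from highest to lowest risk score."""
    return sorted(stories, key=lambda story: story.risk, reverse=True)


# The ratings here are made up for the example.
backlog = [
    AbuserStory(
        "An attacker uses the Review Product-function to spread malicious Javascript",
        likelihood=4,
        consequence=3,
    ),
    AbuserStory(
        "An attacker abuses the Review Product-function to gain unlimited access to the database",
        likelihood=3,
        consequence=5,
    ),
]

for story in prioritise(backlog):
    print(story.risk, story.description)
```

The ratings themselves are the hard part and are where the security engineer's judgement comes in; the code only makes the ordering explicit.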

The requirements work is not done yet, however. We need to derive potential Security User Stories and Security test scenarios to make the mitigation of these stories concrete. This is a technical activity that should be done by security-conscious developers and testers.

Deriving Security test scenarios from Abuser Stories

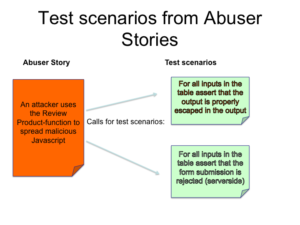

Given that we now have an identified and prioritised Abuser Story, “An attacker uses the Review Product-function to spread malicious Javascript”, we need to find test scenarios that verify that controls are in place to mitigate this threat. This requires in-depth knowledge of web application security. Luckily, there is an excellent community resource in the form of the OWASP project that can help us here. In my opinion, all developers who build web applications should know about this project, and specifically about the OWASP Top 10.

In this case our Abuser Story is an example of nr 3 on the list – A3 XSS – Cross-site scripting. OWASP also provides a cheatsheet with rules on how to mitigate this threat.

Let’s look at rule number 1: “RULE #1 – HTML Escape Before Inserting Untrusted Data into HTML Element Content”. Can this be converted into a test scenario? One way of doing it would be to let the test enumerate “bad input” and check that the user review text is properly escaped when it is output as HTML. This could be done in a data-driven test specification that looks like this:

“For all inputs in the table assert that the input is properly escaped in the output”.
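A minimal sketch of such a data-driven test in Python is shown here. The `render_review` function is a hypothetical stand-in for the site's own view code, and the table of bad inputs is only a small illustrative sample; a real acceptance test would submit each input to the running application and inspect the returned page instead.

```python
import html

# Hypothetical table of "bad input"; a real suite would use a much longer list.
BAD_INPUTS = [
    "<script>alert('xss')</script>",
    '<img src=x onerror="alert(1)">',
    "</p><script>document.location='http://evil.example'</script>",
]

PREFIX = '<p class="review">'
SUFFIX = "</p>"


def render_review(review_text: str) -> str:
    """Hypothetical stand-in for the application's view code.

    It HTML-escapes the untrusted review text before placing it in
    HTML element content.
    """
    return PREFIX + html.escape(review_text, quote=True) + SUFFIX


def assert_escaped(review_text: str) -> None:
    output = render_review(review_text)
    body = output[len(PREFIX):-len(SUFFIX)]
    # No raw markup or quote characters from the input may survive.
    for char in "<>\"'":
        assert char not in body, output
    # Unescaping gives back the original text, so nothing was dropped.
    assert html.unescape(body) == review_text


for bad_input in BAD_INPUTS:
    assert_escaped(bad_input)
print("all inputs escaped")
```

Keeping the inputs in a table makes it cheap to add a new row whenever a review or penetration test turns up another payload.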

Using a good modern web framework helps you pass this test, since such frameworks escape data in HTML output by default.

OWASP also proposes a set of Proactive security controls that can help in this case.

Let’s look at control 4: “Validate All Inputs”. We can write a similar test that checks that proper validation is in place: “For all inputs in the table assert that the input is rejected (server-side)”. This test has the characteristics of a “blacklisting” approach, but this does not mean that the implementation should use blacklisting. In fact, validation is far easier to implement by whitelisting. In this case a good implementation would be to allow only “common” text.
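The following sketch shows how the two sides can fit together: the validator is a whitelist, while the test enumerates bad inputs. What counts as “common” text is an assumption here (ASCII letters, digits, whitespace and basic punctuation, at most 2000 characters), and `is_valid_review` is a hypothetical name; a real site would need a wider definition, for example to accept reviews in other languages.

```python
import re

# Whitelist: assumed definition of "common" review text.
# Anything not matched by this pattern is rejected.
ALLOWED_REVIEW = re.compile(r"[A-Za-z0-9 .,!?'()\n-]{1,2000}")

# Hypothetical table of inputs that must be rejected server-side.
BAD_INPUTS = [
    "<script>alert(1)</script>",
    '"><img src=x onerror=alert(1)>',
    "javascript:alert(1)",
    "",
]


def is_valid_review(text: str) -> bool:
    """Return True only if the whole text consists of allowed characters."""
    return ALLOWED_REVIEW.fullmatch(text) is not None


# Data-driven test: every bad input must be rejected...
for bad_input in BAD_INPUTS:
    assert not is_valid_review(bad_input), bad_input

# ...while an ordinary review is still accepted.
assert is_valid_review("Great product, works as described!")
print("validation checks passed")
```

Validation complements output escaping and does not replace it: the review text should still be escaped when it is rendered.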

Verifying that whitelisting is actually used is, however, better done in a manual code review. This illustrates the fact that it is not always possible to test automatically for all aspects of security, but this does not mean that you shouldn’t try to catch as much as possible in an automated way.

There are a lot more tests that can be envisioned here, but these cover a lot and illustrate the point. Once written, these tests should of course also go into the Continuous Integration Acceptance Test suite.

Automated tools for security testing

Fixing the application security problem is high on the agenda for many companies, but it is hard to do in practice.

This has attracted a lot of tool vendors that claim they can find vulnerabilities automatically. Some of these tools are indeed quite good, but the fact is that modern web applications are quite complex and very difficult to test. In many cases it is impossible to find bugs by automated blackbox testing alone.

Static analysis can be more efficient, but the complexity of modern JavaScript applications presents big challenges even for these advanced (and very expensive) tools. This means that relying on automated testing tools alone can lead to a false sense of security. This is especially true of open-source tools such as FindBugs, w3af and OWASP ZAP in automated mode. This does not mean these tools are bad per se; on the contrary, they are useful, but one should keep in mind that they mostly catch the “low-hanging” fruit. (If you want further information on automating security scans, I recommend Abhay Bhargav’s presentation at OWASP EU 2016.)

Security tests as a Design tool

Writing Security test specifications also shares a positive effect with TDD: it acts as a design tool that helps developers produce a more secure solution. Just having the Abuser Story makes you think about security from the start, and then half the match is already won. With some experience, the test can even come to seem redundant, because the resulting implementation often becomes secure by design.

The limits of automated testing

Although testing is good, it has its limitations. A test can only prove the presence of a vulnerability, not its absence. Code review therefore also has an important place in the process. A review can find bugs that the writer of the tests did not think of. In my opinion, the best way of finding vulnerabilities is to combine code review with manual and automated testing, and let’s admit it, penetration testers and security reviewers are really good at this. Automated tests do raise the bar significantly for bugs to slip through, though, and this enables testers to really focus on the difficult stuff.

References

- Gustav Boström, Jaana Wäyrynen, Marine Bodén, Konstantin Beznosov, Philippe Kruchten, Extending XP Practices to Support Security Requirements Engineering, Workshop proceedings of the International Conference on Software Engineering, Software Engineering for Secure Systems, Shanghai.

- Abhay Bhargav, AppSecEU 16 – SecDevOps: A View from the Trenches, https://www.slideshare.net/abhaybhargav/owasp-appsec-eu-secdevops-a-view-from-the-trenches-abhay-bhargav

Great article with nice insights. In the follow up, I would like to see how to ensure that you have enough security-minded people on the team to do these practices effectively.

In my experience, only a handful of developers have enough security training or knowledge.

Thanks for your comment Vasilij!

I completely agree with you. That’s why most of my work in application security is related to Awareness training. That is the first step. In fact you have inspired me to a follow-up post on an informal “Application Security Maturity Model”. Stay tuned.

I love it. Great line of argumentation.

Hi Gustav, thank you for sharing. My team at Westpac, a bank in New Zealand, is also practicing STTD, but we decided to create use cases and abuse cases for each story instead of creating separate abuser stories. I’m sure you can appreciate that security isn’t something that should be up for debate in a bank, so threat modelling and writing automated security tests is part of my team’s definition of done. The most exciting thing about writing automated security tests first for us, is that it enables us to actually practice continuous delivery in a bank with confidence i.e. we know that if our code is good enough to make it to production via our deployment pipeline, then it’s fit for production.

Thanks for your comment Matthew! Nice to hear that your bank has good practices in place. In general businesses that are under regulatory control are a lot better when it comes to security.

I like the way you are using the Definition of Done also for security tests. That is the way to go I think. Having Abuser stories for each story is an interesting option. One possible drawback could be that they become too detailed and thus less interesting for the Product Owner. On the other hand you get very good security focus for each story. I guess it depends on the context which way is preferable.

Great article – keep up the good work.