Technical Debt is usually referred to as something Bad. One of my other articles, “The Solution to Technical Debt”, certainly implies that, and most other articles and books on the topic are all about how to get rid of technical debt.

But is debt always bad? When can debt be good? How can we use technical debt as a tool, and distinguish between Good and Bad debt?

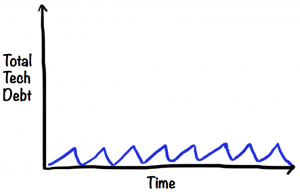

Exercise: Draw your technical debt curve

Think of technical debt as anything about your code that slows you down over the long term. Hard-to-read code, lack of test automation, duplication, tangled dependencies, etc.

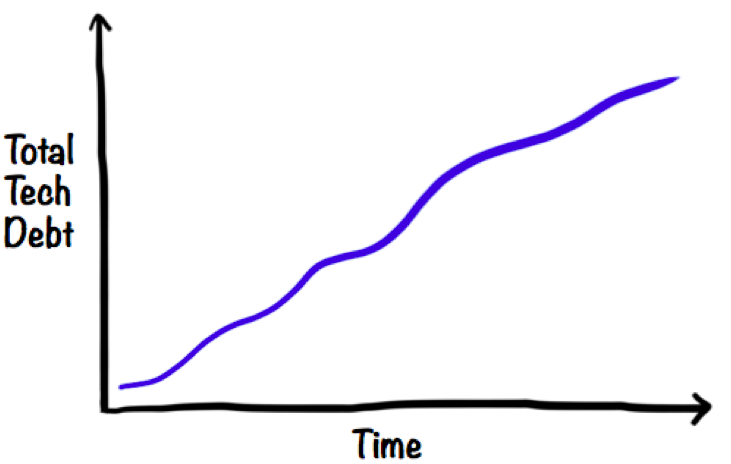

Now think of any system you’re working on. Grab a piece of paper and draw a technical debt graph over time. It’s hard to numerically measure technical debt, but you can think of it in relative terms – how is the relative amount of technical debt changing over time? Going up? Down? Stable?

Sadly, in most systems tech debt seems to continuously increase.

How do you WANT your debt curve to look?

Next question: if you could choose, in a perfect world, how would you like that curve to look instead? It might sound like an obvious question; your spontaneous thought may be something like “Zero tech debt! Yaay!”

Because, heck, didn’t I just say that technical debt is anything that slows you down? And who wants to be slowed down? If we could have zero technical debt throughout the whole product lifecycle, wouldn’t that be the best?

Actually, no. Having zero technical debt at all times will probably slow you down too. Remember, I said “Think of technical debt as anything about your code that slows you down over the long term”. The short term, however, is a different story.

Let’s talk about Good technical debt.

When is a mess Good?

Think of your computer and desk when you are in the middle of creating something. You probably have stuff all over the place: old coffee cups, pens and notes, and your computer has dozens of windows open. It’s a mess, isn’t it?

Same thing with any creative situation – while cooking, you have ingredients and spices and utensils lying around. While recording music, you have instruments and cables and notes lying around. While painting, you have pens and paints and tools lying around.

Imagine if you had to keep your workspace clean all the time – every time you slice a vegetable, you have to clean and replace the knife. After each painting stroke, you have to clean and replace the brush. After each music take, you have to unplug the instrument and put it back in its case. This would slow you down and totally kill your creativity, flow, and productivity.

In fact, the “mess” is what allows you to maintain your state of flow – you have all your work materials right at your fingertips.

When is a mess Bad?

A fresh mess is not a problem. It’s the old mess that bites you.

If you open your computer to start on something new, and find that you still have dozens of windows and documents open from the thing you were working on yesterday, that will slow you down. Just like if you go to the kitchen to make dinner and find that the kitchen is clogged up with old dishes and leftovers from yesterday.

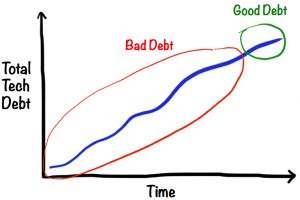

Same with technical debt. Generally speaking, old debt is bad and new debt is good.

If you are coding up a new feature, there are lots of different ways to do it. Somewhere there is probably a very simple elegant solution, but it’s really hard to figure it out upfront. It’s easier to experiment, play around, try some different approaches to the problem and see how they work.

Any technical debt accumulated during that process is “good debt”, since cleaning it up would restrict your creativity. In fact, somewhere inside the messy commented-out code from this morning you may discover the embryo to a really elegant solution to your problem, and if you had cleaned it up you would have lost it.

Another thing. Sometimes early user feedback is higher priority than technical quality. You’re worried that people might not want this feature at all, so you want to knock out a quick prototype to see if people get excited about it. If the feature turns out to be a keeper, you go back and clean up the code before moving on to the next feature.

The problem is, just like in the kitchen, we often forget to clean up before moving on. And that’s how technical debt goes bad. All that “temporary” experimental code, duplicated code, lack of test coverage – all that stuff will really slow you down later when you build the next feature.

So regardless of your reason for accumulating short-term debt, make sure you actually do pay it off quickly!

But wait. How short is “short term”?

When does Good Debt turn into Bad Debt?

My experience is that, in software, the “good mess” is only good up to a few days, definitely less than a week. Then it starts going stale, dirty dishes clog up the kitchen, the leftovers start to stink, and both inspiration and productivity go downhill.

So it’s really important to break big features into smaller sub-features that can be completed in a few days. If you need practice doing that I can highly recommend the elephant carpaccio exercise.

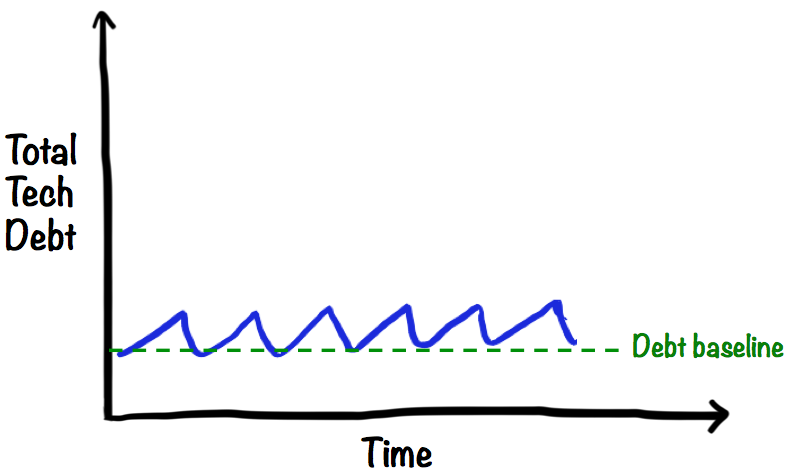

The ideal technical debt curve

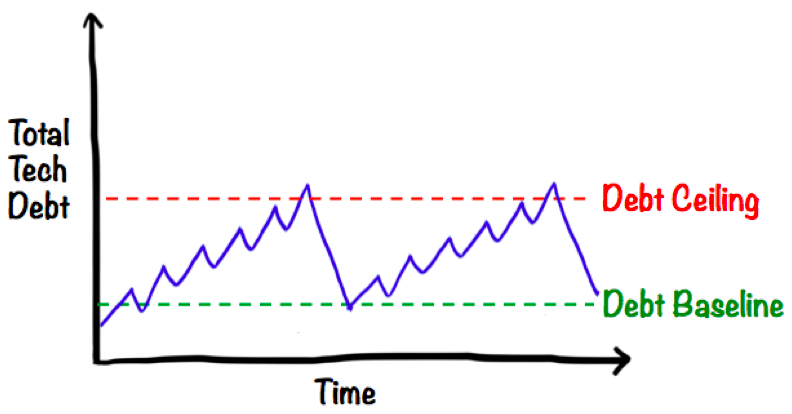

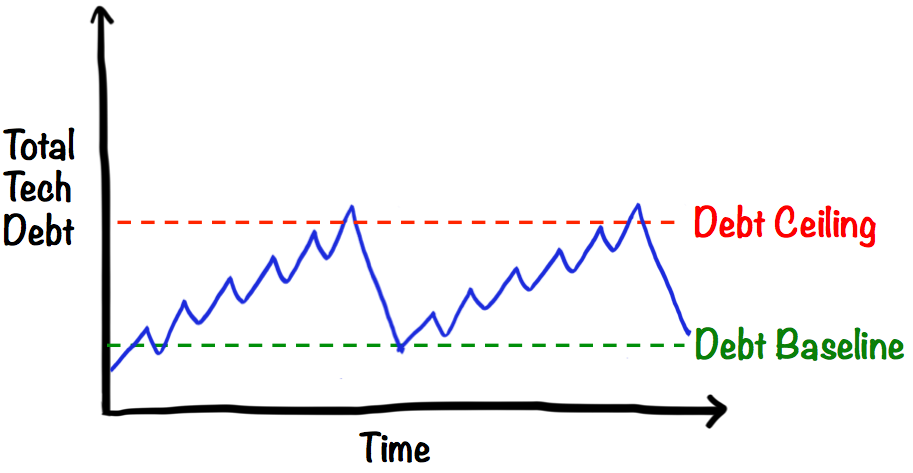

If new debt is good, and old debt is bad, then the ideal curve should look something like this, a sawtooth pattern where each cycle is just a day or two.

That is, I allow myself to make a temporary mess while implementing a new feature, but then make sure to clean it up before starting the next feature. Sounds sensible enough, right?

Just like the kitchen: it’s OK to cause a creative mess while cooking, but clean it up right after the meal. That way you make space for the next creative mess.

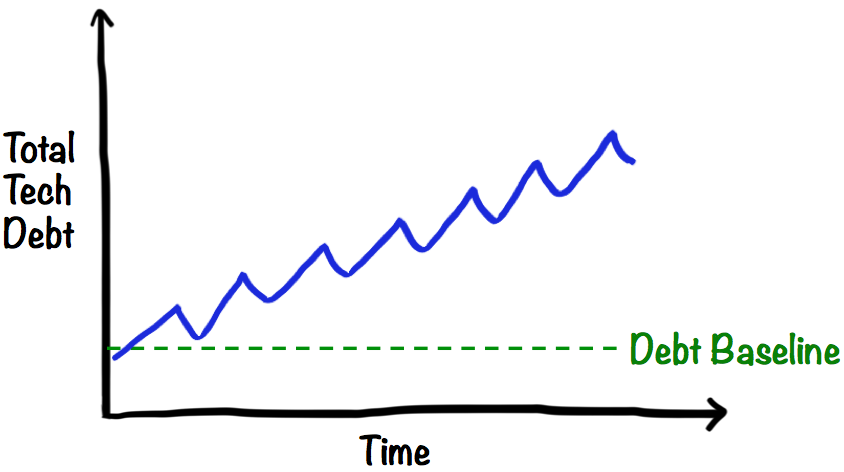

The more realistic ideal technical debt curve

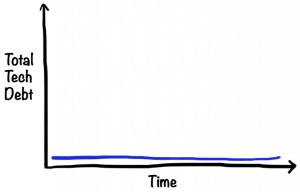

In theory, it would be great to get down to zero technical debt after each feature. In practice, there’s an 80/20 rule involved. It takes a reasonable amount of effort to keep the technical debt at a low level, but it takes an unreasonably high amount of effort to remove every last crumb of technical debt.

So a more realistic ideal curve looks like this, with a baseline somewhere above zero (but not too far!).

That means our code is never perfect, but it’s always in good shape.

The “debt” metaphor works nicely because, just like in real life, most people do have some kind of financial debt (like a house mortgage) on a more or less permanent basis. It’s not all bad. As long as we can afford to pay the interest, and as long as the debt doesn’t grow out of control.

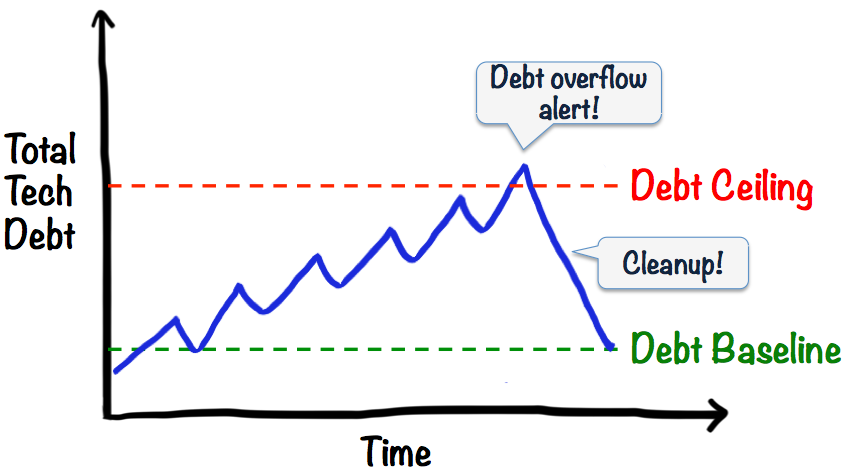

Have a debt ceiling. Just in case.

Now, even if we do clean up after every feature, we are humans and are likely to accidentally leave small pieces of garbage here and there, and it will gradually accumulate over time. Like this:

So it’s best to introduce a “debt ceiling”. Just like certain governments…

When debt hits the ceiling, we declare “debt alert!”, the doors are closed, all new development stops, and everybody focuses on cleaning up the code until the debt is all the way back down to the baseline.
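As a rough illustration of the debt-alert rule, here is a minimal Python sketch. Everything in it is made up for the example: the function name `simulate_debt`, the idea that each feature leaves a fixed `residue` behind after cleanup, and the numbers used for `baseline` and `ceiling`. It only tracks the debt left after each cleanup, not the temporary peak while a feature is in progress.

```python
def simulate_debt(num_features, residue=0.5, baseline=2.0, ceiling=4.0):
    """Track the debt left after each feature's cleanup (hypothetical model).

    Returns (levels, alerts): the debt level after each feature, and the
    indexes of the features that triggered a "debt alert".
    """
    debt = baseline
    levels = []
    alerts = []
    for feature in range(num_features):
        # Even after cleaning up, a little garbage stays behind.
        debt += residue
        if debt >= ceiling:
            # Debt alert: stop new development and clean up to the baseline.
            alerts.append(feature)
            debt = baseline
        levels.append(debt)
    return levels, alerts


levels, alerts = simulate_debt(10)
print(levels)  # debt level after each of the 10 features
print(alerts)  # which features triggered a debt alert
```

In this toy model, a smaller `residue` or a higher `ceiling` makes alerts rarer, and a lower `ceiling` makes each alert cheaper to recover from. That is the same trade-off as choosing where to put the ceiling in real life.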

The debt ceiling should be set high enough that we don’t hit it all the time, and low enough that we aren’t irrecoverably screwed by the time we hit it. Maybe something like this over a half-year period:

How to set the baseline and ceiling

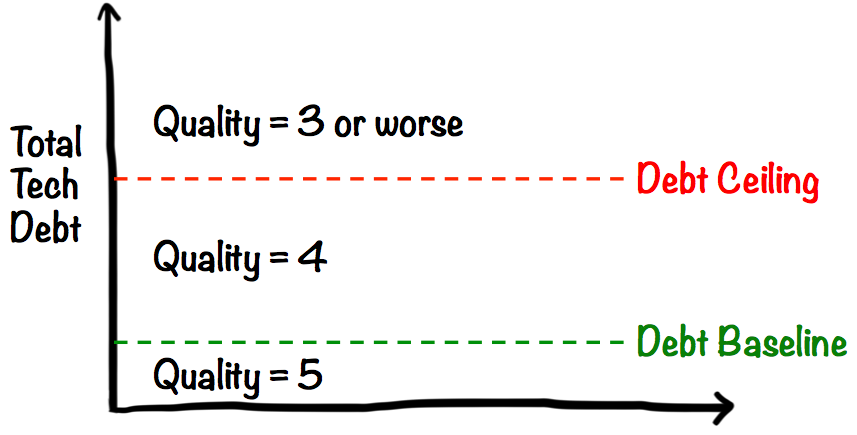

All this raises the question “yes, but How?”. It may seem hard to quantify technical debt. But actually, it’s not. Anything can be measured, as long as it doesn’t have to be exact.

Just ask people on the team “How do we feel about the quality of our code?”. Pick any scale. I often use 1-5, where 5 is “beautiful, awesome code with zero technical debt”, and 1 is “a debt-riddled pile of crap”. With that scale, I would set the debt baseline to 4, and the debt ceiling to 3 (think of debt as the inverse of quality). That means quality will usually be 4, but if it hits 3 we will stop and yank it back up to 4.

Of all the possible metrics that can be used, I find that teams often like this subjective quality metric. It’s simple, and it visualizes something that most developers care deeply about – the quality of their code.

Other more objective metrics (such as test coverage, duplication, etc) can be used as input to the discussion. But at the end of the day, the developer’s subjective opinion is what counts.
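Here is one way the team poll could be turned into a simple check, as a sketch only. The names `quality_status`, `BASELINE_QUALITY` and `CEILING_QUALITY` are hypothetical, and averaging the votes is an assumption made for this example; a team might just as well use the median or the lowest vote.

```python
from statistics import mean

# Quality scale 1-5 (5 = no debt). Debt is the inverse of quality, so the
# debt ceiling corresponds to the LOWER quality number.
BASELINE_QUALITY = 4
CEILING_QUALITY = 3


def quality_status(votes):
    """Summarize the team's answers to "how do we feel about our code?"."""
    average = mean(votes)  # assumption: the team's votes are averaged
    if average <= CEILING_QUALITY:
        return "debt alert"  # stop new development, clean up to baseline
    if average < BASELINE_QUALITY:
        return "drifting"    # above the ceiling, but below where we want to be
    return "ok"


print(quality_status([4, 5, 4, 3]))
print(quality_status([4, 4, 3, 3]))
print(quality_status([3, 3, 4, 2]))
```

The number is only a conversation starter. The “drifting” state is there to prompt a discussion before the ceiling is actually hit.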

Use debt ceiling to avoid a vicious cycle

The debt ceiling is very important! Because once your debt reaches a certain tipping point, the problem tends to spiral out of control, and most teams never manage to get it back down again. That applies to monetary debt as well. And governments…

One reason for this “tipping point” effect is the “broken window syndrome”. Developers tend to unconsciously adapt the quality of new code to the quality of the existing code. If the existing code is bad enough (many “broken windows”), new code tends to be just as bad or even worse – “oh that code is such a mess, I don’t know how to integrate with it so I’ll just add my new stuff here on top”.

Think of a kitchen, at home or at the office. If it’s clean, people are less likely to leave a dirty cup on the counter. If there are dirty cups everywhere, people are very much more likely to just add their own dirty cup on top. We are herd animals after all.

Make quality a conscious decision

My experience is that a quality level of 4 (out of 5) is a good-enough level of quality; clean enough to let the team move fast without stumbling over garbage, but not so overly clean that the team spends most of its time keeping it clean and arguing over code perfection details.

The key is to take a stand on quality. Regardless of how you measure quality, or where you place your debt baseline and ceiling, it’s very valuable to discuss this on a regular basis and make an explicit decision about where you want to be.

How Definition of Done helps

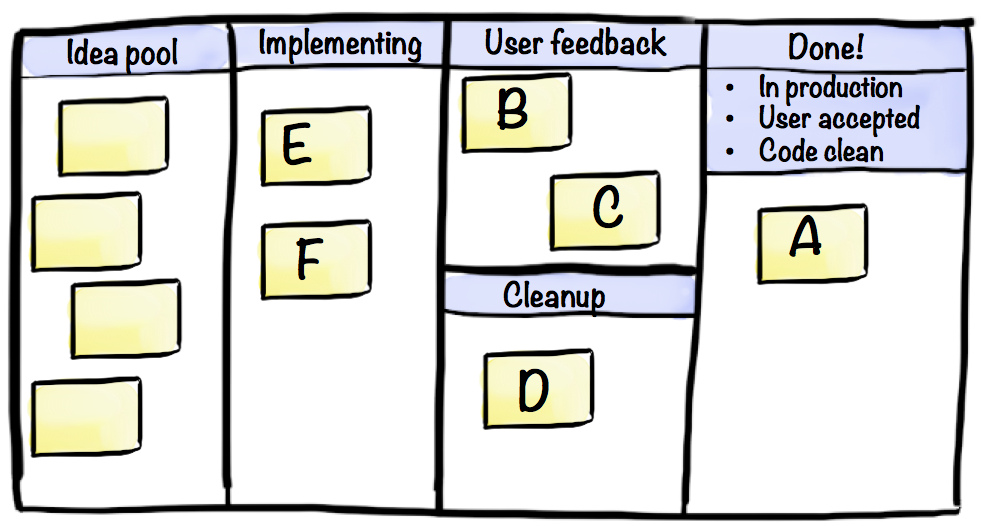

“Definition of Done” is a useful concept for keeping tabs on technical debt. For example, your Definition of Done for a feature could be:

- Code clean

- In production

- User accepted

These are in no particular sequence, as that will vary from feature to feature. Every sequence has its advantages and disadvantages. Sometimes we’ll want to put something in production first, then get user feedback, then clean up. Sometimes we’ll want to clean up first, then get user feedback, then put in production. But the feature isn’t Done until all three things have been done.
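To make the “any order, but all three” rule concrete, here is a small sketch. The `Feature` class and its field names are invented for this example and simply mirror the three checklist items above.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    """A feature card with one flag per Definition of Done item (hypothetical)."""
    name: str
    code_clean: bool = False
    in_production: bool = False
    user_accepted: bool = False

    def is_done(self):
        # The order in which the flags were set does not matter;
        # the feature is Done only when all three are true.
        return self.code_clean and self.in_production and self.user_accepted


# Two features that reach Done via different routes.
ship_first = Feature("ship first, clean later")
ship_first.in_production = True
ship_first.user_accepted = True
print(ship_first.is_done())  # not Done yet: cleanup is still missing
ship_first.code_clean = True
print(ship_first.is_done())

clean_first = Feature("clean first", code_clean=True)
print(clean_first.is_done())  # clean, but not shipped or accepted yet
```

Keeping cleanup as an explicit flag means a shipped, user-approved feature still shows up as unfinished until the mess is gone.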

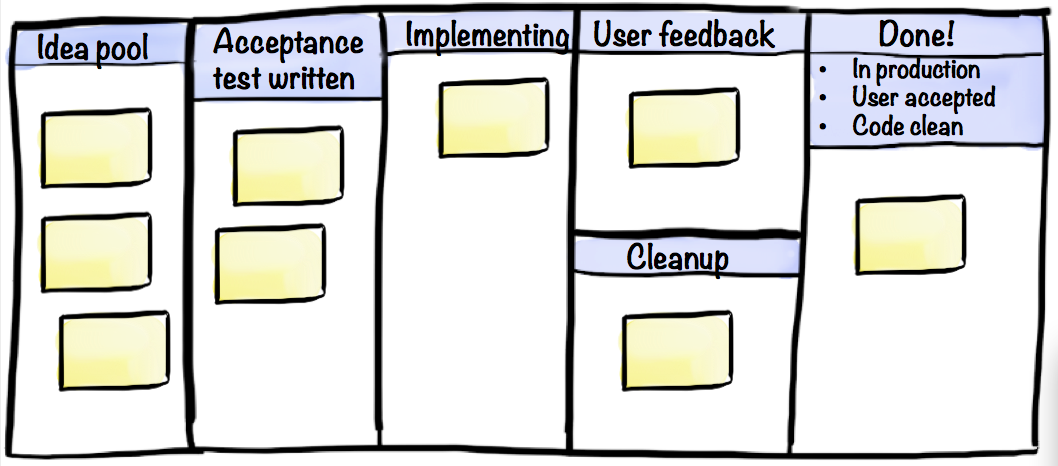

Here’s a sample board to visualize features flowing through this process.

- Feature A: All done. It’s in production, the code is clean, and the user has given thumbs up.

- Feature B & C: Current focus is getting user feedback. B has already been cleaned, C has not.

- Feature D: Current focus is cleaning up the code. User has tried it and given thumbs up.

- Feature E & F: Currently being developed, trying to quickly get to a point where we can get user feedback.

- The rest of the features are in the idea pool (most teams call that a “backlog” but I prefer the term “idea pool”).

How TDD helps

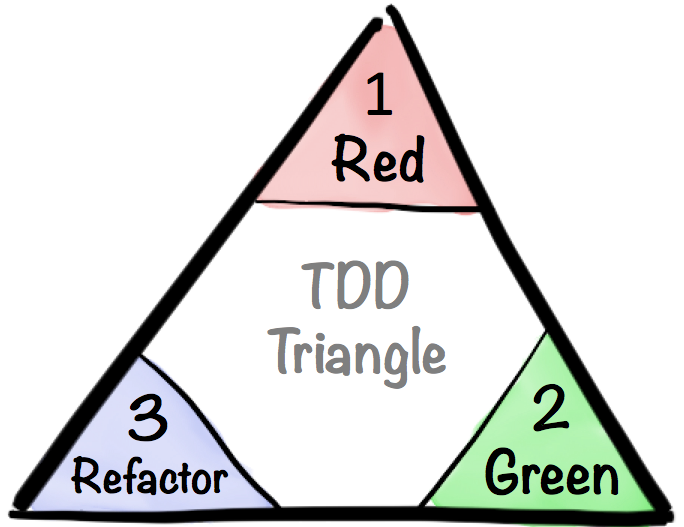

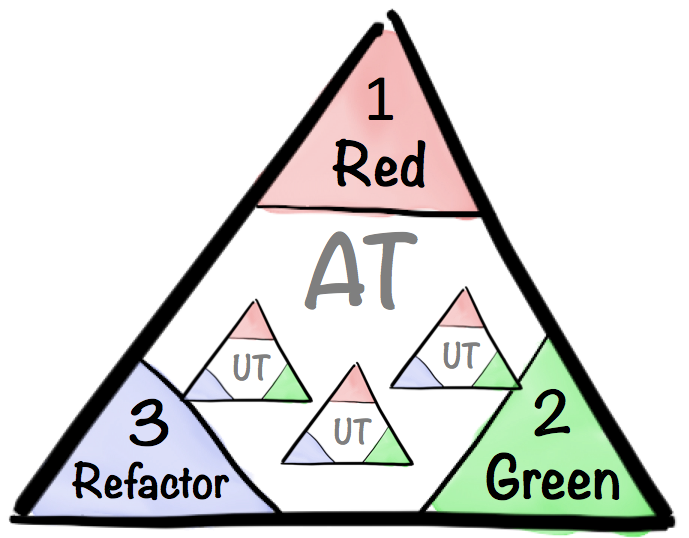

Acceptance Test-driven development is a really effective way to keep the code clean while still enabling experimentation and creativity.

All features are developed in three distinct steps:

First step is to write a failing (“red”) acceptance test. When doing that, we focus exclusively on the question “what does this feature aim to achieve, and how will I know when it works?”. We’re setting a very clear goal and embedding it in code, so at this point we don’t care about quality, or how the feature will be implemented.

Second step is to implement the actual feature. We know when we’re done because the executable acceptance test will go from red to green. We’re looking for the fastest path to the goal, not necessarily the best path. So it’s perfectly fine to write ugly hacks and make a creative mess at this point – ignore quality and just get to green as quickly as possible.

Third step is to clean up. Now we have a working feature and a green test to prove it. We can now clean up the code, and even drastically redesign it, because the running tests (not just the newest test) act as a safety harness to alert us if we’ve broken or changed anything.

This process ensures that we don’t forget the purpose of the feature (since it forces us to write an executable acceptance test from the beginning), and that we don’t forget to clean up before moving on to the next feature.
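The three steps can be sketched in a few lines of Python. The feature itself (summing up a shopping basket) and all names here, such as `acceptance_test`, `total_price_hack` and `total_price_clean`, are hypothetical and chosen only to keep the example small.

```python
# Step 1 (red): write the acceptance test before any implementation exists.
def acceptance_test(total_price):
    """Each item is (name, quantity, unit_price)."""
    assert total_price([("apple", 2, 1.50), ("pear", 1, 2.00)]) == 5.00
    assert total_price([]) == 0


def total_price_not_written_yet(items):
    raise NotImplementedError("feature not implemented yet")


# Step 2 (green): fastest path to a passing test. Ugly is fine here.
def total_price_hack(items):
    t = 0
    for i in range(len(items)):
        t = t + items[i][1] * items[i][2]
    return t


# Step 3 (clean): same behavior, tidier code, protected by the same test.
def total_price_clean(items):
    return sum(quantity * unit_price for _name, quantity, unit_price in items)


def is_green(implementation):
    """Run the acceptance test and report whether it passes."""
    try:
        acceptance_test(implementation)
        return True
    except (AssertionError, NotImplementedError):
        return False


print(is_green(total_price_not_written_yet))  # red
print(is_green(total_price_hack))             # green
print(is_green(total_price_clean))            # still green after cleanup
```

The test does not change between step 2 and step 3. Only the implementation does, which is what makes the cleanup safe.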

To emphasize this process, we can update the board with an “acceptance test written” column, just to make sure that we don’t start implementing a feature before we have a failing acceptance test.

The acceptance test doesn’t necessarily have to be expressed at a feature level (“if we do X, then Y should happen”). Some alternatives:

- Lean Startup style acceptance test: “we have validated or invalidated that users are willing to pay for premium accounts”. Maybe rename the “User feedback” column to “Validating assumption”.

- Impact Mapping style acceptance test: “the feature is done when it increases user activation rate by 10%”. Maybe rename the “User feedback” column to “Validating impact”.

Either way, we just need to make sure cleanup is part of the process somewhere.

TDD can be done at multiple levels – at a feature level (acceptance tests) as well as at a class or module level (unit tests). Think of the unit tests as a bunch of triangles inside the acceptance test triangle. One loop around the big triangle involves a bunch of smaller loops inside.

After each unit test goes green, do minor cleanup around that. When the acceptance test goes green, do a bigger cleanup. Then move on to the next feature.

The key point is that each corner of the TDD triangle comes with a different mindset, and each one is important.

Good quality = happier people

At the end of the day, technical debt is not about technology. It’s about people.

A clean code base is not only faster to work with, it is more fun (or less annoying, if you prefer seeing things that way…). And motivated developers tend to create better products faster, which in turn makes both customers and developers happier. A nice positive cycle 🙂

Nice article. Comparing technical debt with the mess in the kitchen was a great analogy.

I think this came from Uncle Bob Martin’s Clean Code?

Accidental in that case. I haven’t read the book (it’s on my stuff-I-hope-to-read-someday list).

Very good article, thanks!

A step ahead of existing practices

Excellent explanation of technical debt for everyone to understand! From Devs right through to the CEO. It’s comforting to see some others have a grip on what technical debt means.

Hi, thanks for sharing your thoughts around the TDD approach; I also liked the way you illustrated the entire concept with good examples. Another aspect that could be added: when your technical debt is increasing and touching the rooftop, there is a need to do a retrospective and put checks and balances in place to see how one can bring the scale back to quality level 4/5.

Hi, and thanks for a good and interesting read.

I regularly use this analogy when describing situations where teams need to make short-term decisions that in one way or another might cause problems in the longer run. Most often used when we are pretty sure about the long-term problems. This can be both bad and good, and as I understand it the «debt» comparison is related to whether or not teams are able to fix the long-term situation at one point or another. It could get progressively harder (and more costly) to remedy this, thus the analogy to debt (more specifically: interest).

Although I am a firm believer in the analogy of debt, when borrowing a financial term to create a useful analogy it is quite interesting to also consider that domain when looking at what “good debt” could be. The problem is that in finance, “good debt” is a term used by the lending side, not the spending. Basically it means debt your client can handle sustainably (making their down payments). “Bad debt” is obviously when they are incapable of handling this. Also, the concept of «security» is not clear in the analogy, most likely the security is in the investment itself.

Let me elaborate:

In most financial situations debt (or credit) relates to the desire to trade future, longer-term income with short term investment. Usually one that cannot be done within the existing cash-flow limits. The difference between “good” and “bad” here is irrelevant because it just “is”. It is an instrument. The “good” or “bad” is an attribute that relates to how the debt is handled, not the actual debt itself (which could be put to very good use even though you cannot make your down payments). Because it is an instrument that has a corresponding value (or security) «bad debt» can lead to taking ownership of the security.

So, translated to technology that would mean that “debt” could be situations where it is desirable to trade future technology budgets into short-term investments. This could mean not being able to do normal housekeeping tasks, maintenance, new development and refactoring that are in future budgets, and trading that for, let’s say, establishing a new big data platform right now or doing a major quick-fix.

Unless you are coming into new money, debt is just moving and focusing the same money (keeping interest out of the analogy for now). So unless it is used for an investment that will explicitly pay off enough to return to “normal”, it means you are saying “no” to other things, permanently, like in the example above.

Keeping with the analogy, since few of us are coming into new money or resources suddenly we will have to stick with the view that the money or resources is spent on an investment that will pay off enough to get back on track at some point.

So «bad» technology debt would be fitting to situations where we are spending future resources on something that will not pay off (or not enough). The end state could be that we actually lose the investment AND have no budgets going forward.

«Good» technology debt is spent on something that pays off (frees time, causes greater income, less cost), so that we can return to normal.

In this case, there is no good case for when «bad» technology debt can be good (it is not), but debt itself isn’t bad or good, just as long as the down payments are handled sustainably.

i’m not really happy with the way that the “technical debt” term has evolved from what Ward originally said, even though I may be part of that evolution.

i wish the kanban clearly showed ready to view vs clean. couldn’t tell from pic that B was clean and C not. or was it the other way?

i find that skilled programmers can keep debt low while going fast — often low enough.

i suspect it takes a very mature and very trusted team to repair TD once it hits the threshold. i suspect this is a Very Advanced Technique … but if the team is advanced do they need it?

Will we become tolerant of bad code so that our “3” becomes worse and worse?

Very nice. These are just questions and comments.

Hi Ron, thanks for sharing your thoughts on this.

As for the kanban board, it shows the current focus for each feature, not the state or history. For features B & C we are primarily focusing on user feedback. For D we are mainly focusing on cleanup. I wanted to illustrate that the order between those two activities will vary from case to case: sometimes it makes sense to skip quality and put something in front of users quickly, sometimes it makes sense to go clean before going to the user. A feature may bounce back and forth between these states.

If they need to track the current state more clearly, the team can mark on the feature card when it is clean, or when the user has accepted it.

Nice article! I’m also a big fan of Acceptance Test-driven development. If anyone is interested in ATDD for web apps, they should check out Helium (http://heliumhq.com). (I’m one of the cofounders). It lets you write executable test scripts of the form

startChrome();

goTo("Google.com");

write("Helium");

press(ENTER);

click("Helium – Wikipedia");

assert Text("Helium is the second lightest element").exists();

This is Java, Python bindings are also available. Note how no reference to any HTML source code properties is required (thus the tests can be written in a TDD style, before the implementation).

I should also point out that Helium is a commercial product, so you (or, more frequently, your employer) have to pay to use it.

I like how you’ve applied this to the development lifecycle and the need to clean up. Your comments complement a paper I’ve written on technical debt of systems and managing that within an organisation. http://bciadvisory.blogspot.com.au/2014/02/hidden-cost-of-technical-debt.html

Thx for sharing!

“Imagine if you had to keep your workspace clean all the time – every time you slice a vegetable, you have to clean and replace the knife. ”

cf “Working Clean” — critical Chef skill.

thank you for good article…

Thank you. Excellent read.

Thanks a lot, this is very interesting. It’s a lot like Kent Beck’s concept of 3X (eXplore, eXpand & eXtract). Everything we create goes through an initial eXploration phase, where we need to experiment and try out things before we find the solution and the problem. It makes sense to take technical debt during the eXplore phase, to pay it back in the final eXtract phase.

It’s fun to see that Kent Beck was the inventor of both TDD and 3X.

Time and time again I find myself coming back to this article and re-sharing it with teams I am on