In the pursuit to automate testing to create faster feedback loops and build quality in, some teams forget the value of manual testing. In my experience, without manual testing (as well) we are toast.

In the pursuit to automate testing to create faster feedback loops and build quality in, some teams forget the value of manual testing. In my experience, without manual testing (as well) we are toast.

Security Test-Driven Development – Spreading the STDD-virus

Agile development with short release cycles have been here for a while now. Most of us want fast feedback loops and many even Continuous Delivery with changes in production software everyday. However, most of us also want secure software and the question is: Can security engineering keep up the pace? A fast feedback that your production website has been hacked is not so nice.

Security is a quality attribute of your software, just like performance. If you don’t want to be surprised by bad performance in production, what do you do? You test and design for it of course and you preferably do so continuously from the start.

In my experience, the same however cannot be said of security. It is very often relegated to a once a year penetration-test activity. Not really an agile way of working is it? Not a secure one either since untested software is released as often as everyday. There must be a better way of working which allows us to both work in an agile way and to verify security on the way.

In the security field people like Gary McGraw have long been advocating ways of “Building Security In”. The Microsoft MVP Troy Hunt also proposes that you should “Hack yourself first”, instead of just waiting for the pentesters. Shouldn’t it be possible to weave these security activities into the process the same way as it is possible with normal testing activities using TDD? Indeed I, as well others believe it is so. Let’s look at how small extensions to an agile process can work in this direction.

Extending Sprint planning to deal with security

To start off you must first know what the requirements are. In a normal agile project this is done by eliciting User Stories from the customer or the Product Owner.

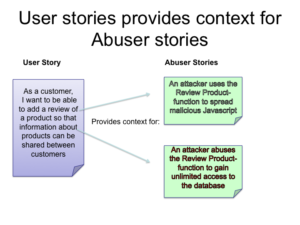

Let’s take an example of an online e-Commerce site. A User Story might be “As a customer I want to be able to add a review of a product so that information about products can be shared between customers”.

This works very well for traditional functional requirements, but for non-functional requirements a little extra thought is needed. In the case of security requirements it is often useful to state a requirement in a scenario that should NOT happen. In our case we shall call these scenarios “Abuser Stories”. These stories are non-technical descriptions of bad things you want to make sure you avoid. An Abuser story for this site might be:

“An attacker uses the Review Product-function to spread malicious Javascript”. Another might be: “An attacker abuses the Review Product-function to gain unlimited access to the database”.

A Product Owner might not be able to come up with these stories himself, but might need the help of a security engineer to help him with finding these threat scenarios.

What is an Agile Tester? Slides from my Sri Lanka talk.

Here are the slides for my talk What is an agile tester from the Colombo Agile Conference in Sri Lanka.

Stop-the-line spoken word performance

På Agila Sverige 2012 höll jag min första ignite i form av en Majakovski- och Bob Dylan-inspirerad Spoken World performance om hur vi på Polopoly skapade kvalitet genom extremt fokus på automatiserade tester och en stoppa-bandet-kultur. Förra veckan fyllde konsultbolaget Adaptiv 5 år och firade genom att låte en utvald skara Agila Sverige-talare reprisera sina

Continue readingInterview with Peter Antman on software development

In May this year I was interviewed at the SmartBear MeetUI user conference in Stockholm about my background as a journalist, Linux and open source, software development in general and testing more specifically. Here’s a post containing the video and interviews with the other speakers at the conference. Interview with Peter Antman

Continue readingStill not automating tests? Here’s why you should (again)!

The other day I read a blog by Uncle Bob. It more or less stated that no matter what situation you are in, writing automated tests will make you go faster. Ok, this is old news I thought, until I checked Uncle Bob’s tweets. A fair amount of people argued against this statement, and that surprised me!

So I started thinking about why there still are fellow software developers that doesn’t believe in automated testing? Have they not seen them in action and understood what they are for? Please, gather around the campfire, and I will tell you one, just one, of my war stories, and then I will tell you why also you should write automated tests!

Always Fix Broken Windows

I keep a close watch on these tests of mine I keep my Jenkins open all the time I see a defect coming down the line Because you’re mine, I stop the line A zero bug policy is the only valid way to look at quality, just like there should never be any broken windows

Continue readingStop the Line – Build Quality In with Incremental Compilation

We in the software industry are still far behind when it comes to automated quality checks. Toyoda Sakichi for example invented the automated loom with stop the line capability almost 100 years ago. I write more about that in my first blog in a three-part series on building the quality in on the SmartBear blog.

Continue readingCountry Ambassador for Agile Testing Days 2012

A while ago I was asked to become one of the Swedish country ambassadors for the Agile Testing Days 2012 conference. I said yes, because I think it’s a great conference. As country ambassador, I help in promoting the conference. I chose to do it, because I think it’s a good conference and I already recommend it to my friends.

Continue reading

How to catch up on test automation

Many companies with existing legacy code bases bump into a huge impediment when they want to get agile: lack of test automation.

This article illustrates how to address this problem by creating a test automation backlog and implementing a few tests each sprint.

| Test case | Risk |

Manual test cost (man-hours) |

Automation cost (story points) |

| Block account | high | 5 hrs | 0.5 sp |

| Validate transfer | high | 3 hrs | 5 sp |

| See transaction history | medium | 3 hrs | 1 sp |

| Sort query results | medium | 2 hrs | 8 sp |

| Deposit cash | high | 1.5 hr | 1 sp |

| Security alert | high | 1 hr | 13 sp |

| Add new user | low | 0.5 hr | 3 sp |

| Change skin | low | 0.5 hr | 20 sp |

Manifest for the Agile Tester

I recently spoke at a conference for testers arranged by SAST (Swedish Association of Software Testers) on the topic of Agile software development. Over 150 testers turned up, breaking all previous records for that association! I’m glad to see that you testers are interested in this stuff!

Anyway, trying to figure out a good opening statement for this conference I found the following angle that I’m pretty happy with afterwards, in a smug sort of way :o)

Continue reading